Enterprise AI Agent Governance for Oracle Fusion: Security Controls + ROI Scorecard (Avoid “Agent Washing”)


Table of Contents

- Introduction

- Define “AI agent” in Oracle Fusion and avoid agent washing

- Control model for Oracle Fusion agents: Identity, roles, and tool permissions

- Runtime risk controls: Safe actions, integration complexity, and threat-driven guardrails

- Evidence pack plus ROI scorecard: Measurable controls and measurable value

- Conclusion

- FAQs (Frequently Asked Questions)

- Organizations must distinguish true AI agents from basic automation to avoid the costs and risks of “agent washing.”

- This blog maps agent governance directly to Oracle Fusion’s native security constructs, including RBAC and audit reports.

- It defines a strict control model that separates “builder” and “runner” permissions to enforce least-privilege access.

- The included ROI scorecard helps IT leaders measure cycle time reduction and audit readiness alongside operational costs.

Introduction

CIOs evaluating Oracle Fusion AI agents rarely ask for generic productivity boosts. They demand specific, measurable outcomes: faster invoice processing in ERP; reduced manual exceptions in Human Capital Management (HCM); and strict, audit-ready controls for every automated action. The market pushes excitement, but enterprise IT requires reliability.

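Governance has become a critical delivery requirement. Without clear boundaries, organizations face “agent sprawl,” the uncontrolled growth of agents across teams, and the rising risk of “agent washing,” where ordinary automation is marketed as an agent.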

This blog outlines a practical AI agent governance framework mapped directly to Fusion security constructs, including roles, privileges, and audit reports. We also introduce a simplified AI Agent ROI scorecard to help security and finance teams review controls alongside business value. You will leave with an access control checklist, an audit evidence pack, and a template to measure returns effectively.

Define “AI agent” in Oracle Fusion and avoid agent washing

For this framework, an agent is software capable of planning steps and executing actions within Fusion workflows by invoking tools or APIs, not merely generating text. This definition relies on measurable side effects, such as creating invoices or updating personnel files, rather than conversational ability.

Agent washing complicates enterprise buying. Vendors frequently rebrand standard chat interfaces or rule-based scripts as “agents.” This marketing tactic leads to misaligned controls; a deterministic script requires different oversight than a probabilistic agent. Organizations must filter these claims to apply the correct risk models.

The Oracle AI Agent Marketplace introduces specific governance pressures. Partners provide agent templates that teams can customize with specific tools, data sources, and model choices. This flexibility demands strict boundaries early in the project lifecycle:

- Domains: Explicitly define if the agent operates within ERP, HCM, SCM, or CX.

- Actions: Distinguish between read-only inquiries and write-capable tasks.

- Channels: Specify where agents can be invoked and restrict who holds the authority to publish changes.


Control model for Oracle Fusion agents: Identity, roles, and tool permissions

Effective AI agent access control relies on precise identity management. Access to Oracle AI Agent Studio is granted by assigning specific duty roles to job roles, which depends on prerequisites such as permission groups and security imports. This foundation dictates who can view, edit, or delete agent configurations.

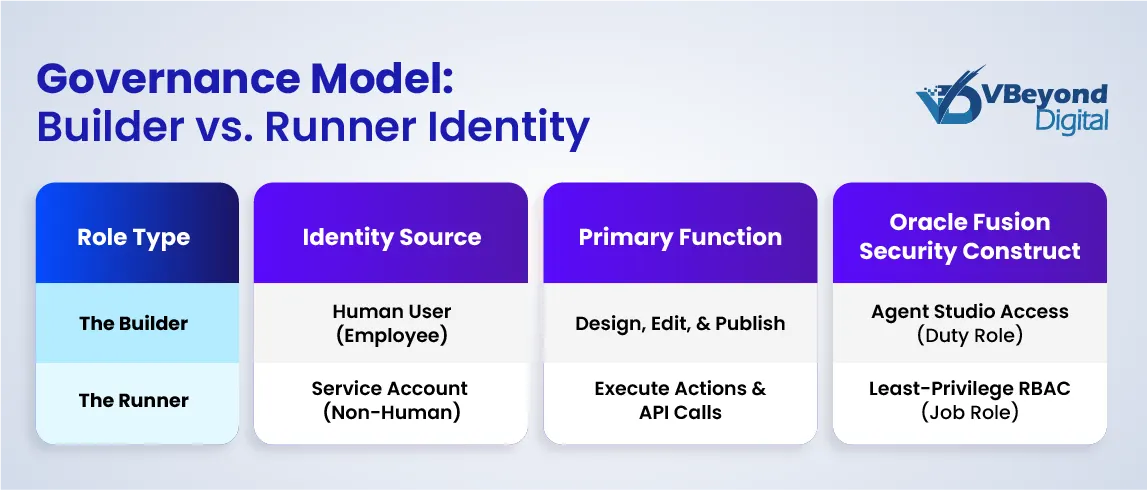

Security architects must separate “builder” and “runner” concerns. Builder permissions govern who can create or modify agents and templates within the studio. Runner permissions define what data and functions the agent can access at runtime. These must map directly to Fusion Role-Based Access Control (RBAC) and adhere to a strict least-privilege design. An agent designed to check invoice status should not hold permissions to approve payments.
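The builder/runner split can be illustrated with a minimal Python sketch. The permission names, the `RunnerProfile` class, and the helper functions are hypothetical and chosen for illustration; they are not Fusion duty roles or privilege codes. The idea is only that a runner profile is deny-by-default and shares nothing with the builder permission set.

```python
from dataclasses import dataclass

# Hypothetical permission names for illustration only; not real Fusion privilege codes.
BUILDER_PERMISSIONS = frozenset({"agent.create", "agent.edit", "agent.publish"})


@dataclass(frozen=True)
class RunnerProfile:
    """Runtime scope for one agent: the only actions it may perform."""
    agent_name: str
    allowed_actions: frozenset = frozenset()


def can_run(profile: RunnerProfile, action: str) -> bool:
    """Deny by default: allow an action only if it was explicitly granted."""
    return action in profile.allowed_actions


def is_separated_from_builder(profile: RunnerProfile) -> bool:
    """A runner profile should carry no builder permissions."""
    return profile.allowed_actions.isdisjoint(BUILDER_PERMISSIONS)


# The invoice-status agent from the text: it can read status and nothing else.
invoice_status_agent = RunnerProfile(
    agent_name="invoice-status-checker",
    allowed_actions=frozenset({"invoice.read_status"}),
)

print(can_run(invoice_status_agent, "invoice.read_status"))  # True
print(can_run(invoice_status_agent, "payment.approve"))      # False
print(is_separated_from_builder(invoice_status_agent))       # True
```

In a real deployment the equivalent checks would live in Fusion role definitions rather than application code; the sketch only shows the shape of the rule a reviewer should be able to verify.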

Agents also need their own non-human identities. While an agent often initiates from a human request, it should execute actions under a tightly controlled service identity. This keeps every tool call attributable to a specific agent and gives that identity its own lifecycle: provisioning, rotation, and revocation. If an agent is compromised or misbehaves in a workflow, you must be able to revoke its specific credentials without disabling the human user’s entire account.

Manage the tool catalog and API boundaries as privileged integrations. If Oracle Fusion AI agents utilize external REST API tools, gate them behind role-based privileges. Define allowed endpoints, methods (GET vs. POST), and data objects for each tool.
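One way to express such a tool catalog is as a small, deny-by-default policy table. The following is a sketch under stated assumptions: the tool name, role name, host, and path prefix are all hypothetical, and the check is written in plain Python rather than any Oracle API.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class ToolPolicy:
    required_role: str            # role the calling agent identity must hold
    allowed_methods: frozenset    # e.g. {"GET"} for a read-only tool
    allowed_host: str             # the single host this tool may reach
    allowed_path_prefixes: tuple  # data objects the tool may address


# Hypothetical catalog entry; names and URLs are illustrative assumptions.
TOOL_CATALOG = {
    "invoice_lookup": ToolPolicy(
        required_role="AGENT_INVOICE_READER",
        allowed_methods=frozenset({"GET"}),
        allowed_host="erp.example.com",
        allowed_path_prefixes=("/api/invoices/",),
    ),
}


def is_call_allowed(tool: str, method: str, url: str, caller_roles: set) -> bool:
    """Return True only if the tool, role, method, host, and path all match policy."""
    policy = TOOL_CATALOG.get(tool)
    if policy is None:
        return False  # unknown tools are denied
    if policy.required_role not in caller_roles:
        return False
    if method.upper() not in policy.allowed_methods:
        return False
    parsed = urlparse(url)
    if parsed.scheme != "https" or parsed.hostname != policy.allowed_host:
        return False
    if ".." in parsed.path.split("/"):
        return False  # reject simple path traversal attempts
    return parsed.path.startswith(policy.allowed_path_prefixes)


roles = {"AGENT_INVOICE_READER"}
print(is_call_allowed("invoice_lookup", "GET", "https://erp.example.com/api/invoices/123", roles))   # True
print(is_call_allowed("invoice_lookup", "POST", "https://erp.example.com/api/invoices/123", roles))  # False
```

Keeping the catalog as data rather than scattered conditionals makes it reviewable: a security architect can read one table to see which endpoints and methods each tool is permitted to use.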

Oracle AI Agent Marketplace templates introduce scalability challenges for change control. Since templates can be modified and published, IT leadership needs a standard intake and review path. Establish a clear policy for partner templates: determine which get adopted, which require rewriting for security compliance, and which must be blocked.

Runtime risk controls: Safe actions, integration complexity, and threat-driven guardrails

Security leaders should anchor their threat model in the OWASP Top 10 for Large Language Model Applications. Prompt injection and insecure output handling move from theoretical risks to practical dangers once an agent can call tools or APIs and trigger business actions.

Controls for Prompt Injection in an Enterprise App Context

Defense begins by minimizing the tool surface area per agent. A finance AI agent should not have access to HR data schemas. Implement strict input controls by validating the user’s request and tool arguments against allowed schemas and object types before execution. If the input does not match the expected structure, the agent must reject the request.
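The schema check described above might look like the following sketch. The schema, field names, and the `INV-` number format are assumptions made for illustration; a production system would more likely use an established validation library, but the standard library is enough to show the principle of rejecting anything that does not match the expected structure.

```python
import re

# Hypothetical schema for an invoice-status tool: field -> (type, validator).
INVOICE_STATUS_SCHEMA = {
    "object_type": (str, lambda v: v in {"invoice"}),
    "invoice_number": (str, lambda v: re.fullmatch(r"INV-\d{1,10}", v) is not None),
}


class ToolArgumentError(ValueError):
    """Raised when tool arguments do not match the allowed schema."""


def validate_tool_args(args: dict, schema: dict) -> dict:
    """Return args unchanged if they match the schema exactly; otherwise raise."""
    unexpected = set(args) - set(schema)
    if unexpected:
        raise ToolArgumentError(f"unexpected fields: {sorted(unexpected)}")
    for name, (expected_type, is_valid) in schema.items():
        if name not in args:
            raise ToolArgumentError(f"missing field: {name}")
        value = args[name]
        # Type is checked first, so validators only ever see the expected type.
        if not isinstance(value, expected_type) or not is_valid(value):
            raise ToolArgumentError(f"invalid value for field: {name}")
    return args


# A well-formed request passes; an HR object type would be rejected.
validate_tool_args(
    {"object_type": "invoice", "invoice_number": "INV-1042"},
    INVOICE_STATUS_SCHEMA,
)
```

Rejecting unexpected fields, not just malformed ones, matters here: extra arguments are a common way for injected instructions to widen what a tool call does.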

Controls for Insecure Output Handling

Never pass model output directly into executable instructions or API calls without validation and strict allowlists. Output must be treated as untrusted data until verified. For high-impact write operations such as supplier setup, payment release, or job changes, mandate a human approval step. This acts as a final circuit breaker before the agent commits changes to the database.
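A minimal sketch of this routing logic follows, assuming the model is asked to return a JSON object with an `action` field. The action names and the three decision labels are hypothetical; the point is that the model's text is parsed and checked against allowlists, and high-impact writes are queued for a person instead of being executed.

```python
import json

# Hypothetical action names for illustration.
READ_ACTIONS = {"get_invoice_status"}
HIGH_IMPACT_ACTIONS = {"create_supplier", "release_payment", "change_job"}


def route_model_output(raw_output: str) -> dict:
    """Treat model output as untrusted: parse it, then execute, escalate, or reject."""
    try:
        proposal = json.loads(raw_output)
    except json.JSONDecodeError:
        return {"decision": "reject", "reason": "output is not valid JSON"}
    if not isinstance(proposal, dict):
        return {"decision": "reject", "reason": "output is not a JSON object"}
    action = proposal.get("action")
    if not isinstance(action, str):
        return {"decision": "reject", "reason": "missing or malformed action"}
    if action in READ_ACTIONS:
        return {"decision": "execute", "action": action}
    if action in HIGH_IMPACT_ACTIONS:
        # Write is not performed here; it waits for a human approver.
        return {"decision": "needs_approval", "action": action}
    return {"decision": "reject", "reason": "action not on allowlist"}


print(route_model_output('{"action": "get_invoice_status"}')["decision"])  # execute
print(route_model_output('{"action": "release_payment"}')["decision"])     # needs_approval
print(route_model_output('{"action": "drop_all_tables"}')["decision"])     # reject
```

Anything the allowlists do not name is rejected, so adding a new write capability becomes an explicit, reviewable change rather than something the model can talk its way into.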

Integration Complexity Specific to Fusion Programs

Oracle Fusion AI agents touch multiple systems: the Fusion UI, Fusion APIs, external REST tools, and downstream applications. Treat each boundary as an authorization point and a logging point. Security teams often overlook the lateral movement risk here. If an agent is compromised, it should not be able to pivot from a low-sensitivity task to a high-sensitivity integration.

Scalability Concerns

Governance must work when there are dozens of agents across teams, not just a handful. Hard-coded rules fail at scale. Enterprise AI solutions require policy-driven governance where rules are defined centrally and enforced at the agent runtime level. This helps keep risk exposure under control as your AI agent governance framework expands.

Evidence pack plus ROI scorecard: Measurable controls and measurable value

Governance for Oracle Fusion AI agents requires proving control effectiveness to internal audit and demonstrating value to finance. A vague promise of efficiency fails against the scrutiny of a compliance review. You need an evidence pack that validates your security posture, and an AI Agent ROI scorecard that quantifies the business impact.

The Evidence Pack: What You Can Show in an Audit

The core of your defense is the User and Role Access Audit Report. This report validates function and data security privileges for users or roles, providing the concrete evidence of least privilege required in a Fusion environment. It confirms that an agent’s service identity cannot access data outside its strictly defined scope.

Utilize the Audit Reports work area to track specific actions. This tool records create, update, and delete activity for enabled business objects. Enabling this requires a specific privilege, which itself becomes part of your control story. If an agent modifies a supplier bank account or updates a purchase order, that action must appear here, distinct from human user activity.

Central log collection is non-negotiable. Collecting Fusion audit logs requires a deep understanding of the permission model and integration mechanisms. Refer to the Oracle A-Team Chronicles for reference architectures on extracting these logs to a SIEM or central repository. Without this, you lack a unified view of agent behavior across the enterprise.

Data visibility controls must define the minimum telemetry set per agent.

Every log entry should answer four questions: Who invoked the agent? Which tool was called? Which object was touched? What value changed? This granularity separates a governed agent deployment from a “black box.”
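As a sketch, the minimum telemetry set can be captured as a fixed record that every agent must emit. The field names and sample values below are hypothetical and do not reflect the Fusion audit schema; a timestamp is added as an assumption, since a central log store will generally need one to order events.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAuditEvent:
    invoked_by: str       # who invoked the agent
    agent_identity: str   # service identity the agent ran under
    tool_called: str      # which tool was called
    object_touched: str   # which object was touched
    field_changed: str    # what changed ...
    old_value: str        # ... from this value
    new_value: str        # ... to this value
    recorded_at: str      # assumption: ISO 8601 UTC timestamp


def to_log_line(event: AgentAuditEvent) -> str:
    """Serialize one event as a single JSON line for central log collection."""
    return json.dumps(asdict(event), sort_keys=True)


# Illustrative values only.
event = AgentAuditEvent(
    invoked_by="jane.doe",
    agent_identity="svc-po-update-agent",
    tool_called="update_purchase_order",
    object_touched="PurchaseOrder:PO-1001",
    field_changed="need_by_date",
    old_value="2025-01-10",
    new_value="2025-01-17",
    recorded_at=datetime.now(timezone.utc).isoformat(),
)
print(to_log_line(event))
```

Recording the human requester and the agent's service identity as separate fields is what lets an auditor tell agent activity apart from direct user activity.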

ROI Scorecard Template: Three Metric Families

To justify the investment in Oracle AI Agent Studio and custom development, measuring “time saved” alone is insufficient. Adopt a three-part scorecard:

1. Cycle Time Saved: Calculate the minutes saved per transaction type multiplied by the monthly volume. If an agent automates invoice matching, measure the reduction in end-to-end processing time, not just the task execution time.

2. Exception and Rework Reduction: Track the decrease in manual corrections and failed validations. Agents should reduce the error rate, creating cleaner downstream data. A drop in “returned to requestor” statuses is a strong indicator of success.

3. Risk Reduction Proxy: Measure the reduction in over-privileged roles and access exceptions. A successful agent deployment often allows you to remove broad permissions from human users, tightening the overall security posture. Faster audit evidence production is also a valid metric here.
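The three metric families reduce to simple arithmetic. The sketch below uses made-up baseline figures purely to show the calculation; the class, function names, and numbers are illustrative assumptions, not benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScorecardInputs:
    minutes_saved_per_transaction: float  # end-to-end, not just task execution
    monthly_volume: int
    exceptions_before: int                # e.g. "returned to requestor" per month
    exceptions_after: int
    overprivileged_roles_before: int
    overprivileged_roles_after: int


def cycle_time_hours_saved(i: ScorecardInputs) -> float:
    """Metric 1: minutes saved per transaction times monthly volume, in hours."""
    return i.minutes_saved_per_transaction * i.monthly_volume / 60


def exception_reduction_pct(i: ScorecardInputs) -> float:
    """Metric 2: percentage drop in manual corrections and failed validations."""
    if i.exceptions_before == 0:
        return 0.0
    return 100 * (i.exceptions_before - i.exceptions_after) / i.exceptions_before


def overprivileged_roles_removed(i: ScorecardInputs) -> int:
    """Metric 3 (proxy): number of over-privileged roles eliminated."""
    return i.overprivileged_roles_before - i.overprivileged_roles_after


# Illustrative figures only.
inputs = ScorecardInputs(
    minutes_saved_per_transaction=4.0,
    monthly_volume=3000,
    exceptions_before=250,
    exceptions_after=175,
    overprivileged_roles_before=40,
    overprivileged_roles_after=28,
)
print(cycle_time_hours_saved(inputs))        # 200.0 hours per month
print(exception_reduction_pct(inputs))       # 30.0 percent
print(overprivileged_roles_removed(inputs))  # 12 roles
```

Capturing the "before" values ahead of deployment is the important part: without a baseline, none of the three metrics can be computed after the fact.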

Conclusion

Oracle Fusion AI agents can be managed like privileged actors when you treat identity, role design, tool permissions, and audit evidence as first-class requirements. This approach transforms governance from a bottleneck into an enabler of speed.

Take three distinct items to your next architecture review. First, use a strict definition of “agent” versus automation to block agent washing in procurement discussions. Second, adopt a Fusion-native governance checklist that explicitly answers who can build, who can publish, and exactly what data each tool can access. Finally, implement an evidence pack outline and a scorecard that ties security work directly to operational value.

To move forward, pick one high-volume workflow. Implement read-first controls to test the AI agent governance framework safely. Add a mandatory approval step for any write actions and baseline the AI Agent ROI scorecard before scaling further. This method ensures you build a secure foundation for enterprise automation.

FAQs (Frequently Asked Questions)

What is Oracle Fusion AI Agent Governance?

Oracle Fusion AI Agent Governance is a framework for managing the lifecycle, security, and performance of autonomous software agents within the Fusion ecosystem. It focuses on mapping agent identities to Fusion’s Role-Based Access Control (RBAC), defining strict “Builder” vs. “Runner” permissions, and ensuring every agent action, whether reading data or executing transactions, is logged, auditable, and aligned with enterprise security policies.

How do you secure AI agents in Oracle Fusion?

Security starts with identity. Treat agents as non-human users with specific Duty Roles rather than broad administrative access. Use a “least privilege” model: limit the agent’s scope to only the essential data and tools required for its specific task. Implement “human-in-the-loop” steps for sensitive write actions (like approving payments), and validate all inputs and outputs to reduce the risk of prompt injection and insecure output handling.

What is “agent washing”?

“Agent washing” is a marketing tactic where vendors rebrand standard rule-based automation or basic chatbots as sophisticated “AI agents.” This misleads buyers into expecting autonomous decision-making and learning capabilities that the software does not possess. It creates risk because organizations may apply incorrect governance models, overpay for simple tools, or fail to implement the necessary oversight for what they believe is autonomous software.

Why is governance critical for Oracle Fusion AI agents?

Governance is critical because agents can autonomously execute business transactions, such as modifying invoices or changing employee records. Without strict governance, “agent sprawl” can lead to unauthorized data access, operational errors, and compliance failures. A robust governance framework keeps agents operating within safe boundaries, makes their actions traceable for audits, and ties them to measurable business value without introducing unacceptable risk.

How can organizations prevent agent washing?

Prevent agent washing by enforcing a strict technical definition of an “agent” during procurement and architecture reviews. Require proof that the software can plan steps and execute actions (side effects) rather than just generating text or following a rigid script. Use the ROI scorecard to confirm measurable outcome improvements, so that you pay for genuine agent capability rather than rebranded automation.