Why Most Enterprise AI Pilots Fail, and How Leaders Can Govern ROI from Day One


Key Takeaways

- This blog defines success for Enterprise AI pilots as measurable cost, cycle-time, risk, or revenue outcomes, not pilot completion.

- It explains why AI pilots fail: unclear value, non-AI-ready data, integration gaps, and late risk and cost controls.

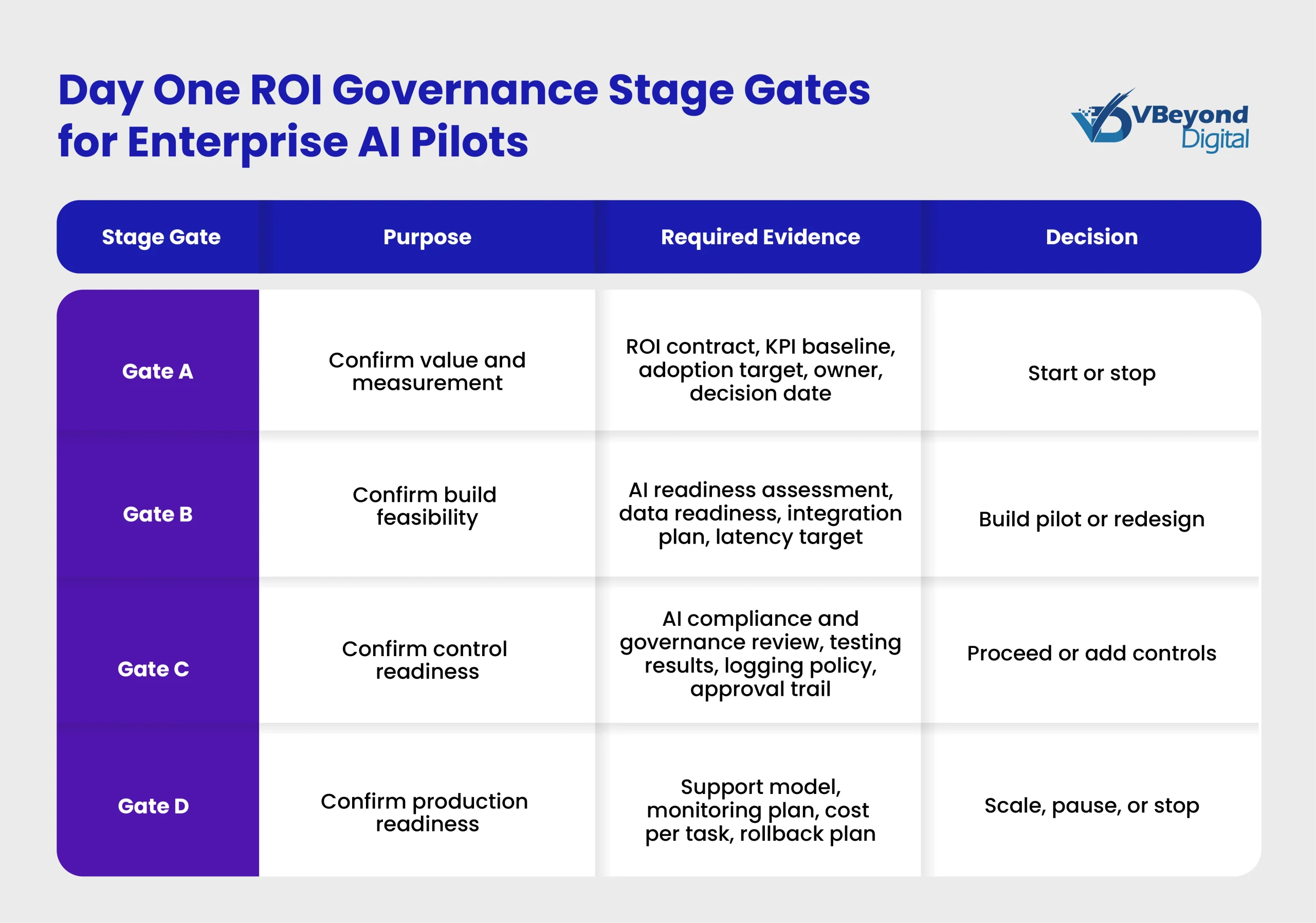

- It also introduces an ROI contract and stage gates as an AI governance framework for start, stop, and scale decisions.

- It connects AI compliance and governance to the NIST AI RMF and adds unit economics to support production decisions.

Introduction

For enterprise AI pilots, “success” is not a demo that impresses stakeholders. Success is a measurable business outcome tied to an operational process: cost reduction in a defined activity; cycle-time reduction in a measurable workflow; risk reduction through stronger controls; or revenue support through higher conversion, retention, or sales productivity. In each case, the pilot needs a baseline, a clear owner, and a measurement plan that can stand up to scrutiny from IT, Finance, and Risk. An AI proof of concept that runs in isolation can still look accurate and useful, while delivering no validated ROI and no viable path to production.

This matters because executive teams are now asking for ROI from generative AI pilots, yet many efforts stall after proof of concept. Gartner previously projected that at least 30% of GenAI projects would be abandoned after proof of concept by the end of 2025 due to poor data quality, inadequate risk controls, escalating costs, or unclear business value.

This blog lays out a Day One approach to reduce AI pilot failure: an ROI contract, stage gates that force decisions, technical readiness checks, and risk and cost controls that support enterprise AI governance from the start.

Why Enterprise AI pilots stall

Enterprise AI pilots most often stall for a predictable reason: the pilot proves that a model can respond, but it does not prove that the business process changes in a measurable way. That gap creates AI pilot failure patterns that repeat across functions and industries, including generative AI pilots built for knowledge work, customer operations, and internal service desks. Gartner’s public view is direct: many GenAI initiatives do not progress beyond proof of concept because business value, data quality, risk controls, and run costs are not clear early enough to support a production decision.

Failure mode 1: unclear value hypothesis

The early signal is language like “helpful assistant” or “improve productivity,” with no numeric target and no accountable owner.

Without an ROI contract, teams cannot answer basic questions from Finance and operations leaders: what changes, for which users, in which workflow, and by how much? A pilot that lacks a baseline and a decision date becomes a permanent experiment. The result is a growing list of AI proof of concept efforts that consume engineering time, data access cycles, and security reviews, while producing no accepted ROI outcome and no scale path. This is one reason AI projects fail after initial demos, even when stakeholders like the experience.
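To make the ROI contract concrete, here is one way to model it as a small Python record that reports which terms are still undefined. This is a minimal sketch under stated assumptions: the class name `ROIContract`, its fields, and the `missing_fields` check are hypothetical, drawn from the elements this article names (owner, baseline, numeric target, decision date, exit criteria), not from any standard template.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ROIContract:
    """Hypothetical ROI contract for one AI pilot; field names are illustrative."""
    use_case: str
    workflow: str
    business_owner: str               # accountable owner, empty if nobody has signed up
    kpi: str                          # e.g. "mean time to resolve (hours)"
    baseline: Optional[float]         # measured before the pilot starts
    target: Optional[float]           # numeric target the pilot must reach
    decision_date: Optional[date]     # when the start/stop/scale call is made
    exit_criteria: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Return the contract terms that are still undefined."""
        gaps: List[str] = []
        if not self.business_owner:
            gaps.append("business_owner")
        if self.baseline is None:
            gaps.append("baseline")
        if self.target is None:
            gaps.append("target")
        if self.decision_date is None:
            gaps.append("decision_date")
        if not self.exit_criteria:
            gaps.append("exit_criteria")
        return gaps


# A "helpful assistant" pilot with no owner, baseline, target, or decision date.
vague = ROIContract(
    use_case="Helpful assistant",
    workflow="IT service desk",
    business_owner="",
    kpi="mean time to resolve (hours)",
    baseline=None,
    target=None,
    decision_date=None,
)
print(vague.missing_fields())
# ['business_owner', 'baseline', 'target', 'decision_date', 'exit_criteria']
```

A pilot whose contract still has gaps is, in the terms above, a permanent experiment; the check simply makes that visible before engineering time is committed.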

Failure mode 2: data is not ready for the use case

Enterprise AI pilots break when the data required for a specific workflow is missing or inconsistent. Common symptoms include incomplete metadata, weak lineage, conflicting definitions across systems, limited access pathways, and no monitoring of source changes.

For generative AI pilots, the pain is often visible in retrieval quality: the assistant answers confidently, but cites outdated policies, mixes regions, or cannot trace the source record used. Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are unsupported by AI-ready data, which aligns with what many CIOs see when pilots hit real enterprise constraints.
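The retrieval symptoms above can often be caught before the pilot starts by checking the documents that feed the index. The sketch below assumes each document carries a system-of-record ID, a region tag, and a last-reviewed date; the `SourceDocument` fields, the `readiness_issues` function, and the 365-day review window are all hypothetical choices for illustration, not a prescribed data-readiness standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class SourceDocument:
    """One document in a retrieval index (hypothetical fields)."""
    doc_id: str
    source_record: Optional[str]   # system-of-record ID, needed for lineage and citations
    region: Optional[str]          # e.g. "US" or "EU", so answers do not mix regions
    last_reviewed: Optional[date]  # when the content owner last confirmed it is current


def readiness_issues(docs: List[SourceDocument], today: date,
                     max_age_days: int = 365) -> Dict[str, List[str]]:
    """Return, per document ID, the problems that make it unsafe to retrieve."""
    issues: Dict[str, List[str]] = {}
    for doc in docs:
        problems: List[str] = []
        if not doc.source_record:
            problems.append("no source record (lineage gap)")
        if not doc.region:
            problems.append("no region tag")
        if doc.last_reviewed is None:
            problems.append("no review date")
        elif (today - doc.last_reviewed).days > max_age_days:
            problems.append("stale: past review window")
        if problems:
            issues[doc.doc_id] = problems
    return issues


corpus = [
    SourceDocument("travel-policy-v3", "HR-1042", "US", date(2025, 1, 15)),
    SourceDocument("travel-policy-v1", None, None, date(2021, 6, 1)),
]
print(readiness_issues(corpus, today=date(2025, 6, 1)))
# {'travel-policy-v1': ['no source record (lineage gap)', 'no region tag', 'stale: past review window']}
```

The check is use-case specific by design: a different workflow might require other metadata (product line, contract type, language) before its data counts as AI-ready.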

Failure mode 3: workflow and integration gaps

Many Enterprise AI pilots run in a sandbox and never touch the systems that define "real work." Production needs identity and access management (SSO, RBAC), audit logs, ticketing, and integration with core platforms such as Microsoft Dynamics 365 AI, ERP, and data warehouses. Without those pieces, adoption stays low because users must copy and paste between tools, and IT cannot support the solution under normal incident, change-control, and compliance practices.

Failure mode 4: risk and cost show up late

When privacy, security, model behavior, and operating cost are deferred to “phase two,” the pilot can look successful until a release decision forces hard questions:

- What data is exposed in prompts and logs?

- What is the review path for high-impact outputs?

- What is the monthly run-rate at target usage?

Gartner has warned that escalating costs and inadequate controls are frequent reasons GenAI projects stop after proof of concept.


Day One ROI governance model

Enterprise AI pilots fail most often when each team runs a separate proof of concept with its own assumptions, data access path, and definition of “value.”

A portfolio approach creates one intake path for AI pilots and one decision cadence. In practice, that means a small cross-functional group (business owner, IT, security, data, and finance) that reviews use cases weekly or biweekly and ranks them on three dimensions:

- Value: Measurable savings, cycle-time reduction, risk reduction, or revenue support tied to a specific workflow

- Feasibility: Data availability, integration complexity, latency constraints, and support readiness

- Risk: Data sensitivity, compliance exposure, model failure impact, and required human review points
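One way to turn the three dimensions into a ranked intake backlog is a simple weighted score. This is a sketch under assumptions: the 1-5 scales, the weights, the choice to invert risk, and the example use cases are all hypothetical, and a review group would calibrate its own scoring (or add hard rules, such as excluding any use case above a risk ceiling).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UseCase:
    name: str
    value: int        # 1-5: measurable savings, cycle-time, risk, or revenue impact
    feasibility: int  # 1-5: data availability, integration complexity, latency, support readiness
    risk: int         # 1-5: data sensitivity, compliance exposure, failure impact (5 = riskiest)


# Hypothetical weights; the review group would agree on its own.
WEIGHTS = {"value": 0.5, "feasibility": 0.3, "risk": 0.2}


def priority_score(uc: UseCase) -> float:
    """Weighted score where higher value and feasibility help and higher risk hurts."""
    inverted_risk = 6 - uc.risk  # risk 1 contributes 5, risk 5 contributes 1
    return (WEIGHTS["value"] * uc.value
            + WEIGHTS["feasibility"] * uc.feasibility
            + WEIGHTS["risk"] * inverted_risk)


def rank(backlog: List[UseCase]) -> List[UseCase]:
    """Order the intake backlog from highest to lowest priority score."""
    return sorted(backlog, key=priority_score, reverse=True)


backlog = [
    UseCase("Invoice exception triage", value=4, feasibility=4, risk=2),
    UseCase("Customer email drafting", value=3, feasibility=3, risk=4),
    UseCase("Contract clause search", value=5, feasibility=2, risk=3),
]
for uc in rank(backlog):
    print(f"{uc.name}: {priority_score(uc):.1f}")
# Invoice exception triage: 4.0
# Contract clause search: 3.7
# Customer email drafting: 2.8
```

The value of a shared score is less the number itself than the fact that every pilot is compared on the same dimensions at the same cadence.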

This is ROI Governance: leadership funds fewer Enterprise AI pilots, measures them consistently, and stops work that cannot reach production.

Risk and cost controls that protect ROI

Enterprise AI pilots often look viable until risk and cost scrutiny begins; then momentum stalls. The pattern is familiar: teams finish an AI proof of concept, then security flags prompt data exposure, compliance asks for audit trails, and Finance asks for a run-rate forecast that no one prepared. Gartner has warned that inadequate controls, escalating costs, and unclear business value are common drivers of GenAI projects being abandoned after proof of concept.

At VBeyond Digital, we treat AI compliance and governance as part of ROI Governance, not as a separate track. The goal is simple: make risk, cost, and accountability visible from Day One so the ROI contract can survive real enterprise scrutiny.

Risk governance mapped to a recognized structure

A practical AI governance framework needs common language across IT, Risk, Legal, and product teams. The NIST AI Risk Management Framework (AI RMF) organizes work into four functions: Govern, Map, Measure, Manage.

- Govern: Define accountability, policies, and decision rights. For Enterprise AI governance, this is where you set the stage gates, identify approvers, and define what evidence is required before production.

- Map: Document the use case context, stakeholders, and impact. This is where “why AI pilots fail” becomes preventable, because the team writes down who can be harmed by incorrect outputs and what systems the AI touches.

- Measure: Test and evaluate model behavior against defined metrics. This includes task accuracy, groundedness of retrieved answers, and policy compliance.

- Manage: Run operational controls, monitoring, and incident response after launch, not just during the pilot.
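A stage gate can be expressed as a list of required evidence grouped by the four AI RMF functions, evaluated at the decision date from the ROI contract. The sketch below is illustrative only: the evidence items in `PRODUCTION_GATE` are hypothetical examples, not a list published by NIST, and `gate_decision` deliberately returns a binary "scale" or "stop" with reasons to show how a gate forces a decision.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical evidence required before a production (scale) decision,
# grouped by the NIST AI RMF function it supports.
PRODUCTION_GATE: Dict[str, List[str]] = {
    "Govern": ["named approvers", "signed ROI contract"],
    "Map": ["impact assessment", "inventory of touched systems"],
    "Measure": ["task accuracy report", "groundedness evaluation", "policy compliance tests"],
    "Manage": ["monitoring plan", "incident playbook"],
}


def missing_evidence(gate: Dict[str, List[str]], submitted: Set[str]) -> Dict[str, List[str]]:
    """List required evidence not yet submitted, keyed by AI RMF function."""
    gaps: Dict[str, List[str]] = {}
    for function, items in gate.items():
        missing = [item for item in items if item not in submitted]
        if missing:
            gaps[function] = missing
    return gaps


def gate_decision(gate: Dict[str, List[str]], submitted: Set[str],
                  kpi_target_met: bool) -> Tuple[str, List[str]]:
    """Return 'scale' only with complete evidence and a met KPI target, else 'stop' with reasons."""
    reasons = [f"{fn}: missing {item}"
               for fn, items in missing_evidence(gate, submitted).items()
               for item in items]
    if not kpi_target_met:
        reasons.append("KPI target not met against baseline")
    return ("scale" if not reasons else "stop"), reasons


all_evidence = {item for items in PRODUCTION_GATE.values() for item in items}
print(gate_decision(PRODUCTION_GATE, all_evidence, kpi_target_met=True))
# ('scale', [])
print(gate_decision(PRODUCTION_GATE, all_evidence - {"incident playbook"}, kpi_target_met=True))
# ('stop', ['Manage: missing incident playbook'])
```

Some programs add a third "iterate" outcome with a new decision date; the binary version keeps the focus on evidence that IT, Risk, and product teams can all review in the same terms.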

Controls that fit enterprise use

Controls must match the risk profile of the workflow, not generic checklists. For most generative AI pilots that touch enterprise knowledge and systems, the minimum control set typically includes:

- Data classification and access boundaries: Define what content can enter prompts, retrieval indexes, or tool calls, based on confidentiality tier.

- Prompt and output logging rules: Log what is needed for audit and root-cause analysis, while controlling retention and access to logs based on data sensitivity.

- Adversarial and misuse testing: Run red-team style tests for prompt injection, data exfiltration, and unsafe instructions, then track findings like any other security issue.

- Human review points for high-impact actions: If the AI can trigger customer communications, update records, or change approvals, add explicit human review and a clear rollback path.

- Incident playbooks: Define what triggers an incident, who owns triage, how to disable risky capabilities, and how to communicate impacts.
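Two of these controls, access boundaries by confidentiality tier and human review for high-impact actions, can be enforced as a routing check in front of the model and its tool calls. This is a minimal sketch with assumed names: the `Tier` levels, `MAX_PROMPT_TIER`, the action names in `HIGH_IMPACT_ACTIONS`, and the three routing outcomes are hypothetical placeholders for an organization's own classification scheme and workflow.

```python
from enum import IntEnum
from typing import Set


class Tier(IntEnum):
    """Hypothetical confidentiality tiers; map these to your own classification scheme."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Highest tier allowed into prompts, retrieval indexes, or tool calls for this use case (assumption).
MAX_PROMPT_TIER = Tier.INTERNAL

# Illustrative names for actions that change customer-facing or system-of-record state.
HIGH_IMPACT_ACTIONS: Set[str] = {"send_customer_email", "update_record", "change_approval"}


def may_enter_prompt(content_tier: Tier) -> bool:
    """True if content at this tier is inside the use case's access boundary."""
    return content_tier <= MAX_PROMPT_TIER


def route_action(action: str, content_tier: Tier) -> str:
    """Block out-of-boundary content, queue high-impact actions for a human, allow the rest."""
    if not may_enter_prompt(content_tier):
        return "blocked"
    if action in HIGH_IMPACT_ACTIONS:
        return "queued_for_review"
    return "auto_approved"


print(route_action("summarize_ticket", Tier.INTERNAL))      # auto_approved
print(route_action("update_record", Tier.PUBLIC))           # queued_for_review
print(route_action("summarize_ticket", Tier.CONFIDENTIAL))  # blocked
```

In practice, each "blocked" or "queued_for_review" outcome would also be logged under the logging rules above, so audit and root-cause analysis can see why an action did or did not run.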

This is the difference between a pilot that “works” and an Enterprise AI pilot that can pass production reviews without rework.

AI unit economics

Even strong model quality can fail in the business case if unit economics are unknown. Track cost as part of the ROI contract and report it at each stage gate:

- Cost per task = Total run cost divided by completed tasks

- Cost per active user = Total run cost divided by weekly active users

- Monthly run-rate = Projected monthly cost at target adoption (for example, cost per task multiplied by expected monthly task volume)
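The three metrics above reduce to simple arithmetic over pilot telemetry. The sketch assumes one week of data, a `PilotWeek` record (a hypothetical name), and a run cost that already bundles model, retrieval, hosting, and support spend; the run-rate projection also assumes cost scales linearly with task volume.

```python
from dataclasses import dataclass


@dataclass
class PilotWeek:
    """One week of pilot telemetry (hypothetical structure)."""
    total_run_cost: float     # model, retrieval, hosting, and support cost for the week
    completed_tasks: int
    weekly_active_users: int


def cost_per_task(w: PilotWeek) -> float:
    """Total run cost divided by completed tasks."""
    return w.total_run_cost / w.completed_tasks


def cost_per_active_user(w: PilotWeek) -> float:
    """Total run cost divided by weekly active users."""
    return w.total_run_cost / w.weekly_active_users


def monthly_run_rate(w: PilotWeek, target_tasks_per_month: int) -> float:
    """Projected monthly cost at target adoption, assuming cost scales linearly with task volume."""
    return cost_per_task(w) * target_tasks_per_month


week = PilotWeek(total_run_cost=1000.0, completed_tasks=4000, weekly_active_users=125)
print(cost_per_task(week))             # 0.25
print(cost_per_active_user(week))      # 8.0
print(monthly_run_rate(week, 60_000))  # 15000.0
```

Real costs rarely scale perfectly linearly (caching, volume pricing, and heavier usage by power users all shift them), so the projection is a starting estimate to revisit at each stage gate.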

Set budgets and alerts early, then compare AI costs to the baseline process cost used in the ROI contract. This keeps generative AI pilots tied to outcomes instead of becoming open-ended spend. It also reduces AI pilot failure risk by forcing early decisions on model choice, retrieval design, and integration scope, based on measurable ROI Governance rather than assumptions.
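Budget alerts and the baseline comparison can be combined into one check run at each reporting cycle. In this sketch, `assisted_cost_per_task` is an assumed input meaning the AI run cost per task plus the human effort that remains per task, compared against the baseline process cost from the ROI contract; the function name `cost_alerts` and the 80% warning ratio are hypothetical.

```python
from typing import List


def cost_alerts(run_rate: float, monthly_budget: float,
                assisted_cost_per_task: float, baseline_cost_per_task: float,
                warn_ratio: float = 0.8) -> List[str]:
    """Return alert messages; an empty list means inside budget and cheaper than baseline."""
    alerts: List[str] = []
    if run_rate > monthly_budget:
        alerts.append("run-rate exceeds monthly budget")
    elif run_rate > warn_ratio * monthly_budget:
        alerts.append("run-rate above warning threshold")
    if assisted_cost_per_task >= baseline_cost_per_task:
        alerts.append("assisted process does not beat baseline cost per task")
    return alerts


print(cost_alerts(15_000, 20_000, assisted_cost_per_task=3.10, baseline_cost_per_task=4.50))
# []
print(cost_alerts(17_000, 20_000, assisted_cost_per_task=4.80, baseline_cost_per_task=4.50))
# ['run-rate above warning threshold', 'assisted process does not beat baseline cost per task']
```

Comparing the full assisted process, not just the model bill, avoids a common trap: a cheap model call that still leaves most of the human effort in place may not beat the baseline at all.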

Conclusion

Enterprise AI pilots fail for reasons that have little to do with whether a model can generate a good response in a demo. AI pilot failure is most common when ROI Governance is missing from Day One, meaning the value hypothesis is vague, the baseline is unknown, the workflow path to production is unclear, and AI compliance and governance controls arrive too late to support a release decision. Gartner has warned that a meaningful share of generative AI pilots is abandoned after proof of concept because poor data quality, inadequate risk controls, escalating costs, or unclear business value surface after the pilot has already consumed time and budget.

For risk governance, map controls to a recognized structure such as the NIST AI RMF functions (Govern, Map, Measure, Manage), so IT, Risk, and product teams can review the same evidence using shared language.

From strategy to build, VBeyond Digital helps tech leaders turn transformation into measurable outcomes. We bring clarity and velocity to your digital initiatives by tying Enterprise AI governance to ROI contracts, stage gates, and unit economics, so AI pilots move from proof of concept to production with less risk.

FAQs (Frequently Asked Questions)

Enterprise AI pilots are small-scale, time-boxed pilots that validate an AI use case in business operations, using explicit success criteria and stakeholder feedback before expanding to wider adoption.

Common causes include unclear value, data that is not AI-ready, integration gaps, and late risk and cost controls. Gartner earlier projected that at least 30% of GenAI projects would be abandoned after proof of concept by the end of 2025 for these reasons.

Define a KPI baseline and measurement plan, track adoption, calculate unit economics, then compare task cost and cycle time against the baseline process. Gartner cites unclear business value and escalating costs as abandonment drivers, so set a decision date and exit criteria.

The biggest challenge is moving from sandbox to production operations: identity, access, logging, audit, integrations, support, and policy approvals. Pilots often stall on integration issues and delayed approvals.

AI governance sets decision rights, testing expectations, monitoring duties, and risk reviews. Using a structure like NIST AI RMF helps teams manage AI risks across the lifecycle and makes pilot evidence auditable for production decisions.

Data readiness is use case specific: sufficient coverage, quality thresholds, consistent definitions, lineage, access controls, retention rules, and monitoring. Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data.